We had an issue recently where one of our Excel import routines was putting garbled data into the database. By “garbled” I mean the field contained a few normal words then broke into a bunch of strange characters. This Excel file was read in using an ODBC datasource.

If you are not familiar with this method of reading Excel files, here is a short summary.

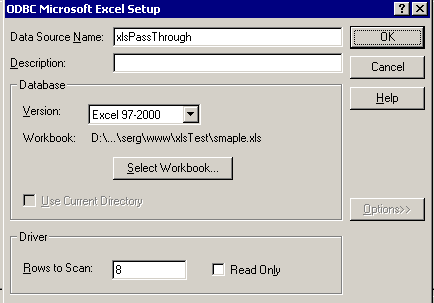

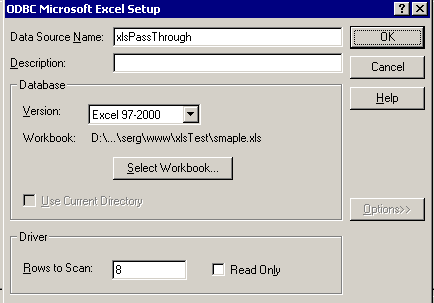

1) Create a system ODBC DSN using the MS Excel driver in your Windows ODBC control panel. Point it to an empty .xls file somewhere on your drive.

2) Create a datasource in ColdFusion (driver: “ODBC Socket”) and select your Windows ODBC DSN from the drop down.

3) Copy the XLS file you want to read over the empty file you set up in your ODBC DSN.

4) Use it like this in your CFML:

<cfquery name="myQuery" datasource="XLSPassThroughDSN">

SELECT *

FROM [Sheet1$]

</cfquery>

This usually works well, but this time we were having problems. Digging through the Excel file turned up nothing out of the ordinary, except that some rows (not the ones causing problems) looked like they were double-byte encoded (Unicode).

It turns out this XLS driver determines the data type of each column in the spreadsheet by sampling only the first few rows (eight by default) and guessing a type from that data. In our case, column B only contained values with fewer than 100 characters in the first few dozen rows, so the driver assumed it was a varchar type of some limited length. But much further down in the spreadsheet, that same column contained a value several hundred characters long, which overflowed the guessed data type.
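To make the sampling problem concrete, here is a rough model of that guessing step in CFScript. It is only a sketch, not the driver's actual code: `guessColumnType`, the `shorttext`/`longtext` labels, and the 255-character cutoff are assumptions chosen for illustration.

```cfml
<cfscript>
// Hypothetical model of the driver's type guessing (illustration only).
// values:     cell contents of one column, top to bottom
// rowsToScan: how many leading rows get sampled
function guessColumnType(required array values, required numeric rowsToScan) {
    var limit = min(rowsToScan, arrayLen(values));
    for (var i = 1; i <= limit; i++) {
        // A long value inside the sample promotes the column to a long text type.
        // The 255-character cutoff is an assumption for this sketch.
        if (len(values[i]) > 255) {
            return "longtext";
        }
    }
    // No long value was seen in the sample, so assume a short text column.
    return "shorttext";
}

// Build a column with 50 short rows followed by one 600-character row.
column = [];
for (i = 1; i <= 50; i++) {
    arrayAppend(column, "short value");
}
arrayAppend(column, repeatString("x", 600));

// A small sample never reaches row 51, so the long value is missed.
writeOutput(guessColumnType(column, 8) & "<br>");
// A larger sample includes row 51 and picks the long text type.
writeOutput(guessColumnType(column, 1000));
</cfscript>
```

The guess depends entirely on where the long value sits relative to the sample size, which is why a spreadsheet can import cleanly one week and break the next.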

Normally this only results in truncated data. If that had been the issue, I probably would have found the fix much sooner. Instead we were ending up with garbled data, which was also truncated, but the garbling made that impossible to tell.

The fix is to tell the driver to scan more rows, preferably all of them, before guessing a data type. The Excel DSN setup dialog in your ODBC control panel has a “Rows to Scan” setting for this.

But that doesn’t work! You need to change the registry value, TypeGuessRows, manually. I set mine to 1000, since that covers the longest spreadsheets we upload.

The registry keys are located at:

For Excel 97:

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Jet\3.5\Engines\Excel

For Excel 2000 and later versions:

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Jet\4.0\Engines\Excel

Here is the MS knowledge base article for more detail: http://support.microsoft.com/kb/189897/
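If you would rather script the change than open regedit, something like the following may work on a Windows ColdFusion server. Treat it as a sketch under assumptions: the value name `TypeGuessRows` comes from the knowledge base article above, the Jet 4.0 branch is used, `currentRows` is just an illustrative variable name, and the account running ColdFusion needs permission to read and write that key.

```cfml
<!--- Read the current setting from the Jet 4.0 Excel engine key --->
<cfregistry action="get"
    branch="HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Jet\4.0\Engines\Excel"
    entry="TypeGuessRows"
    type="dWord"
    variable="currentRows">

<cfoutput>TypeGuessRows is currently #currentRows#</cfoutput>

<!--- Write the new setting; 1000 is the value used in this post --->
<cfregistry action="set"
    branch="HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Jet\4.0\Engines\Excel"
    entry="TypeGuessRows"
    type="dWord"
    value="1000">
```

You may need to restart the ColdFusion ODBC services before the driver picks up the new value.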

Now the driver scans every row in our spreadsheets before determining a data type, and probably uses something like a “text” type for column B. I suspect our data was getting garbled, instead of just truncated, because of the double-byte data.
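One way to check what the driver actually decided is to dump the column metadata of the query result. This is a minimal sketch that reuses the `XLSPassThroughDSN` datasource from earlier; `columnInfo` and `col` are illustrative names, and the type names reported will depend on the driver.

```cfml
<cfquery name="myQuery" datasource="XLSPassThroughDSN">
SELECT *
FROM [Sheet1$]
</cfquery>

<!--- getMetaData() on a query returns one struct per column --->
<cfset columnInfo = getMetaData(myQuery)>

<cfoutput>
<cfloop array="#columnInfo#" index="col">
    #col.Name#: #col.TypeName#<br>
</cfloop>
</cfoutput>
```

Running this before and after the registry change should show whether the guessed type for the problem column changed.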